Amazon reportedly convened an internal “deep dive” recently after a string of outages, apparently caused by AI-assisted coding tools, disrupted its retail site. The meeting followed several highly visible failures and a growing recognition inside the company that safeguards around generative AI in production systems are inadequate.

It is an early glimpse of a broader problem that many organizations would prefer not to acknowledge: As AI is rushed into critical systems, it is introducing new failure modes faster than those organizations can understand or control them.

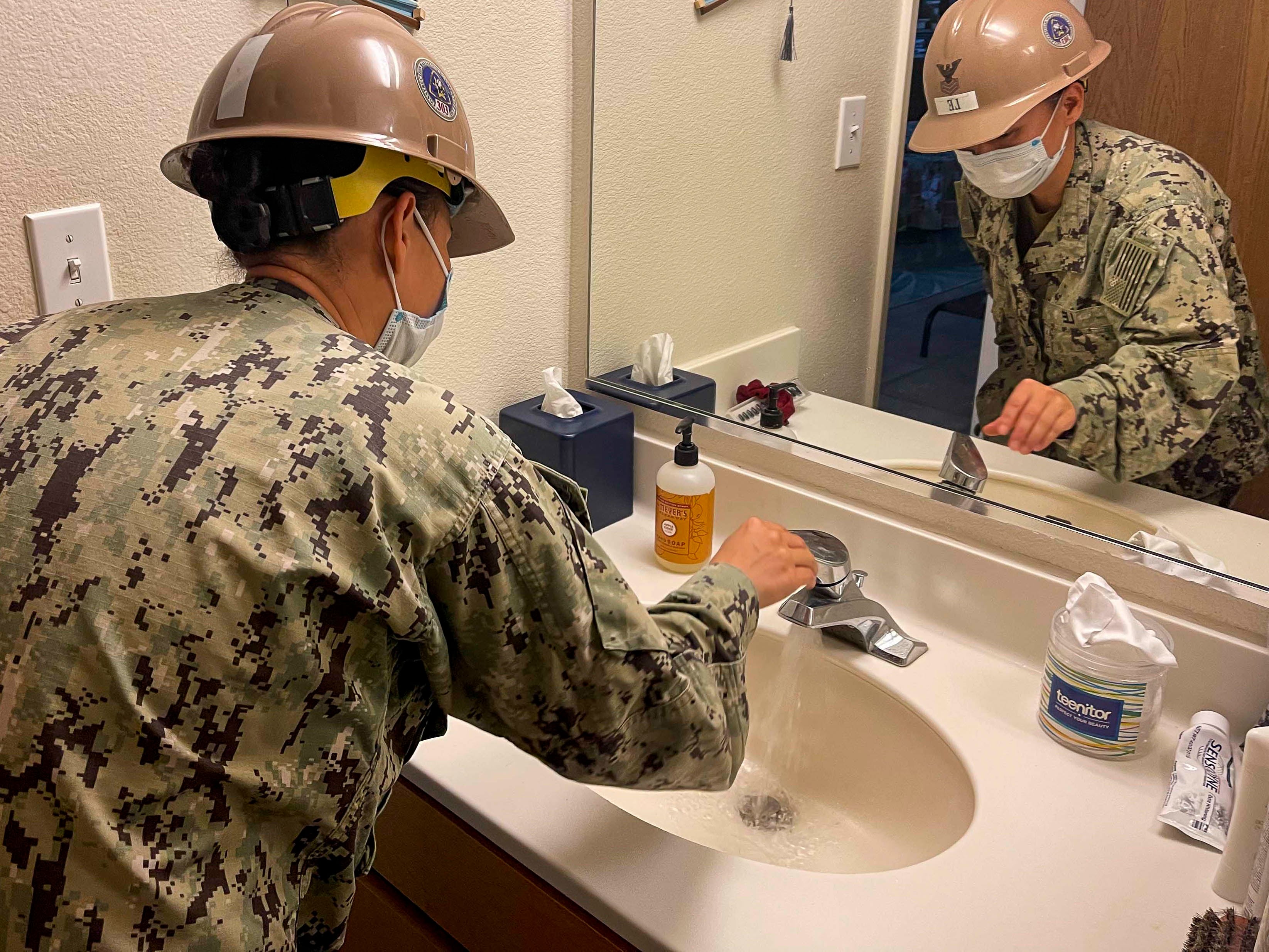

For defense organizations increasingly integrating AI into mission-critical systems, the implications are far more consequential.

When organizations pause to consider these risks at all, they often reach for a familiar reassurance: there will be a “human in the loop.” The idea is that even if the system is complex or unreliable, a person will catch mistakes before they matter.

This reassurance is dangerously misleading. A “human in the loop” whose sole function is to approve a machine’s actions is not a safeguard but a design failure. Attention wanes because nobody can concentrate on a job that is mostly doing nothing, and over time the operator’s skills atrophy to the point that they cannot meaningfully supervise the system. What remains is the appearance of oversight rather than the reality.

In military contexts, this kind of degraded human involvement is not just inefficient but operationally dangerous.

This pattern is not new. Engineers have seen it before, most famously in the Therac-25, a radiation therapy machine introduced in 1982. It combined the functions of two predecessor systems in a smaller, more convenient package, and its improved automation made it faster and easier to operate. Safety was “guaranteed” by the presence of a human operator who had to confirm actions – in effect, a “human in the loop.”

The system failed anyway. Patients began developing severe radiation burns. Hospitals dismissed the possibility of machine error, and the manufacturer insisted overdoses were impossible. Only after sustained investigation was it discovered that the machine contained multiple safety-critical software flaws. By then, six overdose accidents had occurred, three of them fatal.

The deeper problem was not just faulty code but faulty design. The machine frequently halted with poorly explained error messages, and operators had to “press P to proceed” before treatment could continue. Because these errors were common and rarely meaningful, operators became habituated to restarting the system dozens or hundreds of times a day. By the time real malfunctions occurred, the act of “operator confirmation” had already lost its meaning. In one case, an operator restarted the machine multiple times, unknowingly delivering repeated overdoses. The presence of a human operator did not prevent the failure; it normalized it.

Today, we are repeating this mistake. Computer scientists are rushing to incorporate poorly understood AI systems into safety-critical environments, and when concerns are raised, those concerns are often waved away with the same phrase: there will be a human in the loop. This assumption is now appearing in discussions of defense systems, from decision support to autonomous operations.

People will argue that AI is fundamentally different, and in one sense they are right. We have never before deployed systems whose behavior is explicitly probabilistic and nondeterministic in high-stakes environments. In defense contexts, where uncertainty compounds quickly and errors can cascade across systems, this is especially concerning. But AI is also not different in the ways that matter most. It is still software, embedded in larger systems composed of people, processes, and machines. It cannot act in the real world without that surrounding system, and those systems fail in ways that are already well understood. Engineers and operators have spent decades studying how complex, tightly coupled systems behave under pressure.

What we are seeing now is not a new class of failure but a familiar one, accelerated. The software industry is once again demonstrating an inability to learn from its own history. That would be unfortunate if we were only talking about Spotify recommendation algorithms. It becomes dangerous when these same patterns are introduced into the systems that organizations – and nations – depend on.

Recent Pentagon leaks suggest that AI systems may already be influencing where bombs land. In such environments, the illusion of human oversight is worse than no oversight at all. It creates confidence without control.

If we spend the next decade hiding unsafe systems behind the fig leaf of the “human in the loop,” the consequences will not be theoretical.

Mikey Dickerson was the founding administrator of the U.S. Digital Service and is a crisis engineer at Layer Aleph. He is a co-author of the forthcoming book Crisis Engineering.